Why Suno Doesn't Follow Your Chord Progressions and Rhythm Instructions

Your chord progressions and rhythms get ignored

If you've used Suno, you've experienced this.

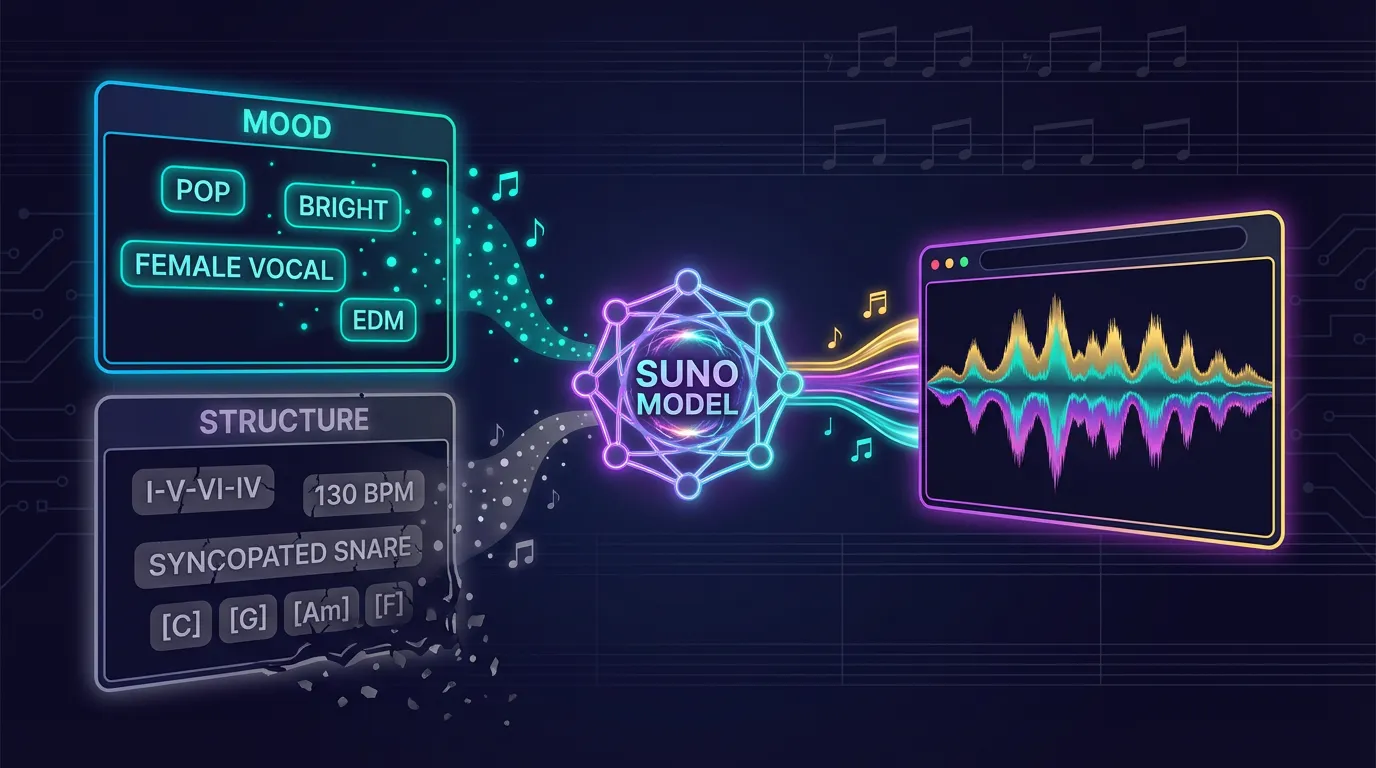

You write "C major, I-V-vi-IV progression, 130 BPM" in the prompt, and the song comes back in a different key with a different chord progression. You write "syncopated snare," and what you get is a straight backbeat on 2 and 4.

Meanwhile, "EDM, bright, female vocalist" works perfectly.

What's behind this gap?

Suno wasn't built to read chord charts or rhythm notation

Music generation AI like Suno is generally understood to learn from massive collections of "text description + audio" pairs. Those text descriptions are typically mood-based: "Pop, bright, acoustic guitar, female vocals." They are not chord charts. They are not rhythm notation.

This means Suno has learned enormous amounts of "Pop → this kind of sound" and "bright → this kind of feel." But it likely has not learned "C G Am F → this specific progression" or "snare on the and of beat 2 → this rhythm."

Put differently: a human musician can read notation and play what's written. Music generation AI hasn't been trained that way. It has no reliable mapping from written notation to sound, so a chord symbol in a prompt is just another word to it.

What text can and can't convey

This structure creates a clear divide between what text prompts reliably convey and what they don't.

Reliably conveyed: genre (Pop, EDM, Jazz), mood (bright, dark, aggressive), instrumentation (acoustic guitar, piano, synth), vocal type (female, male, rap), song structure ([Verse], [Chorus], [Bridge])

Unreliable: chord progressions (I-V-vi-IV), rhythmic detail, melody lines

The first group consists of descriptive labels (genre, mood, instrumentation, section tags) that appear abundantly in training data. The second group consists of note-level specifications of harmony, rhythm, and melody that are largely absent from training data. The fact that this gap hasn't closed across six version updates from v3 to v5.5 suggests the issue lies not in model capability but in the structure of the training data itself.

The other limitation of text: not enough information

Even if Suno perfectly understood chord symbols, text would still fall short.

"Syncopated snare" — is that the and of beat 2? The downbeat of 4? A 16th-note ghost note? The musical information needed is simply not contained in the text.

Chord progressions are the same. Writing [C] [G] [Am] [F] doesn't specify how many beats each chord lasts, whether the voicing is low or high, or whether it's strummed or arpeggiated. There is a massive amount of information that text can't carry.
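As a rough illustration of how much is left unsaid, the sketch below compares the four chord symbols with two hypothetical performances of them. The `ChordEvent` record, its fields, and the specific MIDI note numbers are assumptions made for this example. Both versions reduce to the same text, yet they differ in length, register, and playing style.

```python
from dataclasses import dataclass

# Everything the prompt text actually carries.
text_prompt = ["C", "G", "Am", "F"]


@dataclass
class ChordEvent:
    """Hypothetical record of what a performance must decide per chord."""
    symbol: str
    beats: float        # how long the chord lasts
    midi_notes: tuple   # voicing as exact pitches (60 = middle C)
    style: str          # "strummed" or "arpeggiated"


# Version A: one bar per chord, low voicing, strummed.
version_a = [
    ChordEvent("C", 4, (48, 52, 55), "strummed"),
    ChordEvent("G", 4, (43, 47, 50), "strummed"),
    ChordEvent("Am", 4, (45, 48, 52), "strummed"),
    ChordEvent("F", 4, (41, 45, 48), "strummed"),
]

# Version B: half a bar per chord, high voicing, arpeggiated.
version_b = [
    ChordEvent("C", 2, (72, 76, 79), "arpeggiated"),
    ChordEvent("G", 2, (67, 71, 74), "arpeggiated"),
    ChordEvent("Am", 2, (69, 72, 76), "arpeggiated"),
    ChordEvent("F", 2, (65, 69, 72), "arpeggiated"),
]


def symbols(events):
    """Collapse a performance back to what the text prompt would say."""
    return [e.symbol for e in events]


def total_seconds(events, bpm):
    """Length of the progression at a given tempo (one beat = 60 / bpm s)."""
    return sum(e.beats for e in events) * 60.0 / bpm


print(symbols(version_a) == symbols(version_b) == text_prompt)
print(round(total_seconds(version_a, 130), 2), "s vs",
      round(total_seconds(version_b, 130), 2), "s at 130 BPM")
```

At 130 BPM the two versions last about 7.4 and 3.7 seconds respectively, even though the prompt text for both is identical.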

Sending an audio file solves this problem

Suno's Cover function lets you upload an audio file and rebuild the song in a different style based on that audio.

Audio physically contains both chord progressions and rhythm patterns. Chord progressions exist as "which notes are sounding simultaneously at which moment." Rhythm exists as "where the attacks land in time." Both are embedded directly in the waveform. Because no text pathway is involved, the limitations of text are bypassed entirely.
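The claim that harmony and rhythm live in the waveform can be checked with a toy example. The sketch below synthesizes two chords and a few percussive hits as raw samples, then recovers the notes with the Goertzel algorithm and the hit times with a simple frame-energy threshold. It says nothing about how Suno processes uploads. The sample rate, thresholds, and function names are all assumptions chosen to keep the demo small and dependency-free.

```python
import math

SAMPLE_RATE = 8000  # Hz; a low rate keeps the pure-Python demo fast
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def note_freq(index):
    """Equal-tempered frequency; index 0 is middle C (C4)."""
    return 261.6256 * 2 ** (index / 12)


def synth_chord(note_indices, seconds):
    """Sum of sine waves, one per note."""
    n = int(SAMPLE_RATE * seconds)
    return [
        sum(math.sin(2 * math.pi * note_freq(i) * t / SAMPLE_RATE)
            for i in note_indices)
        for t in range(n)
    ]


def goertzel_power(samples, freq):
    """Signal power at one frequency (Goertzel algorithm)."""
    coeff = 2 * math.cos(2 * math.pi * freq / SAMPLE_RATE)
    s_prev, s_prev2 = 0.0, 0.0
    for x in samples:
        s_prev2, s_prev = s_prev, x + coeff * s_prev - s_prev2
    return s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2


def detect_notes(samples):
    """Names of the notes whose power is close to the strongest one."""
    powers = [goertzel_power(samples, note_freq(i)) for i in range(12)]
    threshold = 0.25 * max(powers)
    return [NOTE_NAMES[i] for i, p in enumerate(powers) if p >= threshold]


def synth_hits(hit_times, seconds, hit_samples=240):
    """Silence with a short 200 Hz burst at each hit time."""
    out = [0.0] * int(SAMPLE_RATE * seconds)
    for t0 in hit_times:
        start = int(t0 * SAMPLE_RATE)
        for i in range(start, min(len(out), start + hit_samples)):
            out[i] = math.sin(2 * math.pi * 200 * i / SAMPLE_RATE)
    return out


def detect_onsets(samples, frame=80):
    """Times (s) where 10 ms frame energy rises from quiet to loud."""
    onsets, prev_energy = [], 0.0
    for start in range(0, len(samples) - frame + 1, frame):
        energy = sum(x * x for x in samples[start:start + frame])
        if energy > 1.0 and prev_energy <= 1.0:
            onsets.append(start / SAMPLE_RATE)
        prev_energy = energy
    return onsets


# Half a second of C major (C, E, G), then half a second of G major (G, B, D).
audio = synth_chord([0, 4, 7], 0.5) + synth_chord([7, 11, 2], 0.5)
half = len(audio) // 2
print(detect_notes(audio[:half]), detect_notes(audio[half:]))

# Hits on an uneven grid; the detector should report the same times back.
print(detect_onsets(synth_hits([0.0, 0.5, 0.75, 1.5], 2.0)))
```

Real recordings are far messier than clean sine waves, so this only shows that the information is present in the samples, not that extracting it is easy.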

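Audio also carries the details that text leaves out: how long each chord lasts, how it is voiced, whether it is strummed or arpeggiated, and exactly where each drum hit falls. Writing all of that out as individual prompt parameters isn't realistic. With audio, a single file delivers everything at once.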

Summary

When you write chord progressions or rhythm patterns in a Suno prompt and they don't stick, it's because Suno wasn't trained to read chord charts or rhythm notation. What Suno can read is descriptive labels such as genre and mood, not note-level specifications of harmony and rhythm.

So the principle is simple.

Use prompts for mood. Use audio for structure.

Continuing to refine your prompts is like writing in a language Suno can't read. If you want to convey structure, send it in a form Suno can actually receive: audio.